Chat Response Without Context

The Chat Response Without Context component enables chat functionality without relying on additional contextual data. Unlike its counterpart, Chat Response With Context, this version focuses on processing user prompts without considering previous interactions or embedded context.

Users can configure an LLM provider, define system prompts, specify topics, and manage chat history, while also setting word limits for summaries and chat history. This tool is ideal for scenarios where each interaction is independent and does not require context retention.

Overview

The Chat Response Without Context component provides a conversational interface between users and a Large Language Model (LLM) without requiring external knowledge base integration. This component enables you to build interactive, multi-turn conversations in your Pipeline Builder applications while maintaining conversation history between interactions. It's designed for scenarios where the LLM's built-in knowledge is sufficient to address user queries and interactions.

How to use:

Key Terms

Term |

Definition |

|---|---|

LLM Provider |

The service provides the Large Language Model capabilities (such as OpenAI GPT, Anthropic Claude, Google Gemini, Llama). |

System Prompt |

Instructions that set the behavior, constraints, and personality of the AI assistant. It can include variables from previous pipeline data. |

Chat History |

The record of previous exchanges between the user and the AI assistant is maintained across multiple iterations. |

sessionId |

A unique identifier generated after the first interaction must be passed as an input parameter in subsequent component calls to maintain conversation continuity. |

Chat Summary |

A condensed version of the chat history that helps maintain context while managing token usage. |

When to Use

Use when you need to implement multi-turn conversations in your applications.

Ideal for collecting information from users through a conversational interface.

Useful for guided interactions where you want to ask specific, predefined questions.

Perfect for customer service applications, interactive forms, or user onboarding flows.

When you need to maintain conversation context across multiple interactions.

Component Configuration

Required Inputs

Input |

Description |

Data Type |

Example |

|---|---|---|---|

LLM Provider |

Select the Large Language Model provider (GPT, Claude, Gemini, Llama) you want to use for generating responses. |

LLMProvider |

|

System Prompt |

Instructions that define how the AI assistant should behave. You can reference variables from previous pipelines by clicking the + icon. Use this to set up predefined questions or to incorporate previous data. |

Text |

|

Prompt |

The user's message is to be sent to the LLM. This is typically connected to a user input field in your application. |

Text |

|

Topic |

A meaningful description of the conversation's intent or subject. Helps maintain conversation focus. |

Text |

|

Chat History |

The record of previous exchanges between the user and the AI assistant. This is maintained by the system. |

Text |

|

Word Limit for Chat Summary |

Maximum word count for the condensed summary of the chat history. This summary is automatically generated and updated as the conversation progresses. |

Integer |

|

Word Limit for Chat History |

Maximum word count for the complete chat history to be included in the context. This helps manage token usage while maintaining relevant conversation context. |

Integer |

|

sessionId |

For the first interaction, this field should be left empty. For subsequent interactions, you must pass the sessionId that was generated during the first interaction. This allows the system to retrieve the conversation history. |

Text |

|

How It Works

First Interaction:

You configure the LLM Provider and System Prompt (with any needed variables).

The user's input is received through the Prompt field.

You set appropriate word limits for chat summary and history.

The component sends this information to the selected LLM.

The LLM generates a response which is returned as output.

A unique SessionID is generated and included in the response.

The actual chat content is automatically maintained at the backend.

Subsequent Interactions:

The SessionID from the previous interaction must be passed as an input parameter.

The new user input is received through the Prompt field.

Using the provided SessionID, the system automatically retrieves the previous conversation history.

Based on the word limits specified, it maintains both a complete history and a summarized version.

All information is sent to the LLM along with the system prompt.

The LLM generates a contextually relevant response based on the entire conversation.

Chat History Management:

If the word limit for the chat summary is set (such as 200 words), the system automatically creates and maintains a condensed summary of the conversation.

If the word limit for chat history is set (such as 500 words), the system keeps the most recent conversation within this limit.

This balanced approach helps maintain context while managing token usage.

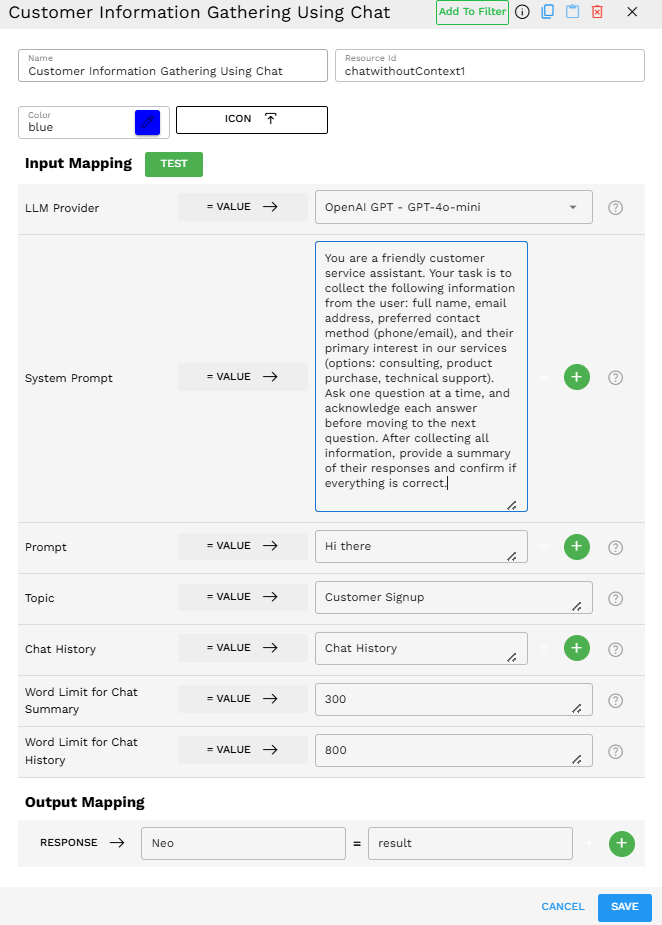

Example Use Case: Customer Information Collection

Scenario: Creating a conversational form to collect customer information for a service sign-up.

Configuration:

LLM Provider: Anthropic Claude

System Prompt: "You are a friendly customer service assistant. Your task is to collect the following information from the user: full name, email address, preferred contact method (phone/email), and their primary interest in our services (options: consulting, product purchase, technical support). Ask one question at a time, and acknowledge each answer before moving to the next question. After collecting all information, provide a summary of their responses and confirm if everything is correct."

Topic: "Customer Signup"

Word Limit for Chat Summary: 150

Word Limit for Chat History: 800

Conversation Flow:

First Iteration: User input: "Hi there" sessionId: [empty] AI response: "Hello! I'm here to help you sign up for our services. Could you please provide your full name?" [SessionId generated: session_abc123] Second Iteration: User input: "John Smith" sessionId: session_abc123 AI response: "Thank you, John Smith. Now, could you please share your email address?" Third Iteration: User input: "john.smith@example.com" sessionId: session_abc123 AI response: "Great! Your email (john.smith@example.com) has been noted. What's your preferred contact method? Would you prefer phone or email?" Fourth Iteration: User input: "Email please" sessionId: session_abc123 AI response: "Thanks for confirming your preference for email contact. Lastly, what's your primary interest in our services? Options are: consulting, product purchase, or technical support." Fifth Iteration: User input: "Product purchase" sessionId: session_abc123 AI response: "Thank you for providing all the requested information. Here's a summary of what you've shared: - Name: John Smith - Email: john.smith@example.com - Preferred contact: Email - Primary interest: Product purchase Is all of this information correct? If yes, your sign-up is complete and a team member contacts you soon. If anything needs correction, please let me know."

Best Practices

Design your System Prompt carefully - This is where you define the entire conversation flow and behavior.

Include specific instructions for handling each question in your system prompt if you are collecting structured information.

Reference pipeline variables in your system prompt to personalize the conversation with existing user data.

Configure appropriate word limits to balance context retention with efficiency.

Always pass the SessionID as an input parameter in subsequent interactions to maintain conversation continuity.

Test your conversation flows thoroughly with different user inputs to ensure robust handling of various scenarios.

Troubleshooting

Issue |

Possible Cause |

Solution |

|---|---|---|

LLM does not remember previous exchanges |

SessionID not passed as input parameter |

Ensure you are capturing the SessionID from the first response and passing it as an input parameter in subsequent calls. |

The conversation does not follow the intended flow |

System Prompt instructions are not specific enough |

Refine your system prompt with more explicit instructions and constraints. |

LLM asks the same questions repeatedly |

Word limits are too restrictive, causing a loss of conversation context |

Increase the word limit for chat summary and chat history. |

Variables not being incorporated |

Incorrect variable referencing in system prompt |

Ensure variables are properly referenced and exist in the pipeline. |

Limitations and Considerations

System Prompt Size - Complex instructions in the system prompt consume tokens. Balance detail with efficiency.

Response Variability - Despite explicit instructions, LLMs may occasionally deviate from the expected conversation flow.

Cost Considerations - Longer conversations with extensive history consume more tokens, increasing costs.