Generate Response Using MCP

The Generate Response using MCP Tools component connects an LLM to a Model Context Protocol (MCP) server. While generating a response, the model can call the tools that server exposes, which extends what it can do beyond standard text generation.

You can:

Configure an LLM provider

Connect to an MCP server

Define system prompts

Specify which tools the model can access

This component is ideal for scenarios requiring specialized processing capabilities such as data analysis, format conversion, or advanced text operations that benefit from dedicated tools.

Overview

The Generate Response using MCP Tools component can be used for:

Tool-Enhanced Responses: Access specialized capabilities through MCP server tools.

Advanced Processing: Utilize tools for tasks that require specialized handling.

Flexible Configuration: Select which tools to enable for specific use cases.

Use Cases: Data analysis, specialized text processing, format conversion, and other tool-assisted tasks.

Key Terms

| Term | Definition |
|---|---|
| MCP | Model Context Protocol – A standardized way for large language models to interact with external tools and services. |
| MCP Server | A server that implements the Model Context Protocol and provides access to various tools that extend the LLM's capabilities. |
| MCP Tools | Specialized functions or capabilities available through an MCP server that an LLM can use to perform specific tasks. |
| LLM Provider | The service that provides the Large Language Model capabilities (such as OpenAI GPT, Anthropic Claude, or Google Gemini). |
| System Prompt | Instructions that set the behavior, constraints, and personality of the AI assistant when using MCP tools. |

When to Use

| Use Case | Description |
|---|---|
| Data Analysis | When you need to analyze structured data using specialized tools beyond the LLM's native capabilities. |
| Format Conversion | When content needs to be transformed between different formats using specialized conversion tools. |
| Specialized Processing | When text or data requires specific types of processing that benefit from dedicated tools. |
| Enhanced Information Retrieval | When you need to retrieve and process information using specialized search or extraction tools. |
| Complex Calculations | When responses require mathematical or statistical operations that benefit from dedicated calculation tools. |
| Code Execution | When you need to execute code or evaluate programming logic using dedicated code interpretation tools. |

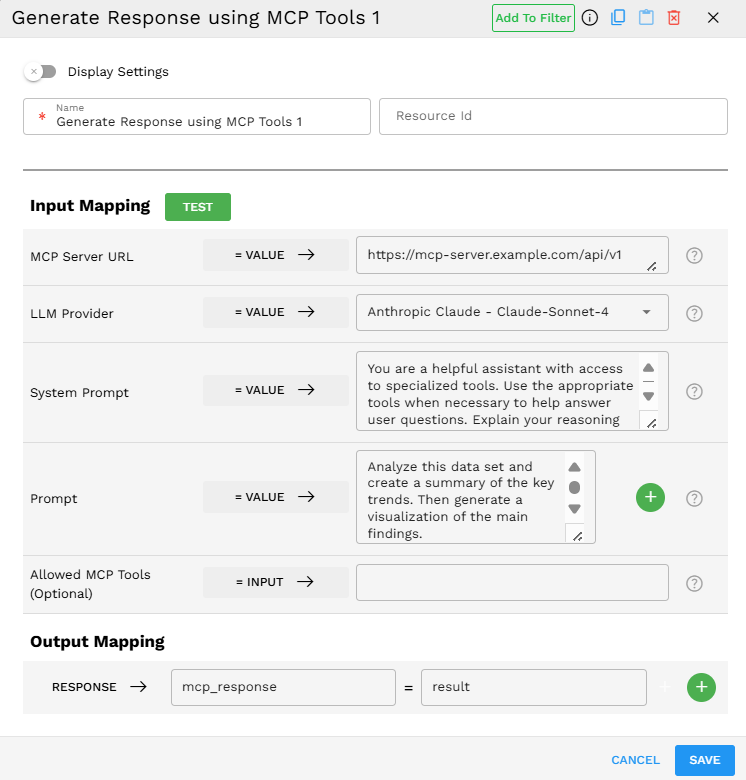

Component Configuration

Required Inputs

| Input | Description | Data Type | Example |
|---|---|---|---|
| MCP Server URL | URL of the MCP server that provides the tools the LLM will use. | Text | |
| LLM Provider | Select the LLM provider that will generate responses with access to MCP tools. | LLMProvider | |
| System Prompt | Instructions defining how the AI assistant should behave and use the available tools. | Text | |
| Prompt | The user's query that the LLM will respond to using MCP tools. Multiple prompts can be added with the + button. | Text | |

How It Works

Connection to MCP Server:

Establishes a connection to the specified MCP server URL.

Discovers the tools available on the server.

Filters the tools if the Allowed MCP Tools parameter is provided.
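The filtering step can be sketched as follows. This is a minimal illustration, not the component's actual implementation: it assumes the server's tool list has already been retrieved as a list of names, and the function `filter_tools` and the tool names `pdf_export` and `sql_runner` are hypothetical. It also reports allowed tools that the server does not provide, which corresponds to the "Specified tools not available" row in the Troubleshooting table.

```python
from typing import Optional


def filter_tools(
    server_tools: list[str], allowed_tools: Optional[list[str]] = None
) -> tuple[list[str], list[str]]:
    """Return (usable_tools, missing_tools).

    Hypothetical helper. Assumption: a missing or empty allowed list means
    "no filter", so every tool the server advertises is usable. Otherwise
    only the intersection is kept (in server order), and any allowed name
    the server lacks is reported as missing.
    """
    if not allowed_tools:
        return list(server_tools), []
    allowed = set(allowed_tools)
    usable = [name for name in server_tools if name in allowed]
    available = set(server_tools)
    missing = [name for name in allowed_tools if name not in available]
    return usable, missing


# The server exposes four tools, three are allowed, and one allowed tool
# ("data_transformer") is not on the server.
usable, missing = filter_tools(
    ["data_analyzer", "chart_generator", "pdf_export", "sql_runner"],
    ["data_analyzer", "chart_generator", "data_transformer"],
)
print(usable)   # ['data_analyzer', 'chart_generator']
print(missing)  # ['data_transformer']
```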

Processing User Prompts:

The user's prompt is sent to the LLM provider along with the system prompt.

The LLM determines whether tools are required.

If needed, tool calls are made to the MCP server.
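As a sketch of this step, the function below assembles a provider-neutral request: the system prompt first, then each user prompt in order, plus a name and description for each usable tool so the LLM can judge whether a tool is needed. The `role`/`content` message shape mirrors common chat APIs, but it is an assumption here, as is sending each added prompt as a separate user message; `build_request` is a hypothetical name, and the exact payload the component sends to each provider is not documented in this section.

```python
def build_request(
    system_prompt: str,
    user_prompts: list[str],
    tools: dict[str, str],
) -> dict:
    """Assemble a hypothetical, provider-neutral chat request.

    tools maps each usable tool name to a short description of what it
    does, so the LLM can decide whether a tool is needed for the prompt.
    """
    messages = [{"role": "system", "content": system_prompt}]
    # Assumption: each prompt added in the component becomes its own user message.
    messages += [{"role": "user", "content": p} for p in user_prompts]
    tool_specs = [
        {"name": name, "description": desc} for name, desc in tools.items()
    ]
    return {"messages": messages, "tools": tool_specs}


request = build_request(
    "You are a data analysis assistant with access to specialized tools.",
    ["Summarize the quarterly sales trends."],
    {"data_analyzer": "statistical analysis"},
)
print(len(request["messages"]))      # 2
print(request["tools"][0]["name"])   # data_analyzer
```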

Tool Utilization:

The LLM requests specific operations from the available MCP tools.

The MCP server executes operations and returns results.

The LLM can chain multiple tool calls, using the results of earlier calls in later ones.

Tool results are incorporated into the LLM's reasoning.

Response Generation:

The LLM formulates the final response.

The response combines insights from the tool operations with the LLM's own analysis.

Depending on the system prompt, the response can also explain the LLM's reasoning and which tools it used.
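The interaction between the LLM and the MCP server described above can be summarized as a loop. In this minimal sketch, `run_with_tools` is a hypothetical driver, and `fake_llm` and `fake_mcp_server` are hypothetical stand-ins for a real provider and a real server: on each turn the LLM either requests a tool call or returns final text, and each tool result is appended to the conversation so later turns can build on it. The `max_steps` cap is also an assumption, added so a model that keeps calling tools cannot loop forever.

```python
from typing import Callable, Union

# A tool request is (tool_name, arguments); a final answer is plain text.
ToolCall = tuple[str, dict]
LLMReply = Union[ToolCall, str]


def run_with_tools(
    llm: Callable[[list[dict]], LLMReply],
    call_tool: Callable[[str, dict], str],
    messages: list[dict],
    max_steps: int = 5,
) -> str:
    """Alternate between the LLM and the MCP server until a final answer."""
    for _ in range(max_steps):
        reply = llm(messages)
        if isinstance(reply, str):
            return reply  # The LLM decided no (further) tools are needed.
        name, args = reply
        result = call_tool(name, args)
        # Feed the result back so the next LLM turn can reason over it.
        messages.append({"role": "tool", "name": name, "content": result})
    raise RuntimeError(f"No final answer after {max_steps} steps")


def fake_llm(messages: list[dict]) -> LLMReply:
    # Hypothetical policy: call the analyzer once, then answer using its output.
    tool_msgs = [m for m in messages if m["role"] == "tool"]
    if not tool_msgs:
        return ("data_analyzer", {"values": [100, 110, 121]})
    return f"Analysis result: {tool_msgs[-1]['content']}"


def fake_mcp_server(name: str, args: dict) -> str:
    # Hypothetical tool: total percentage change from first to last value.
    values = args["values"]
    growth = (values[-1] - values[0]) / values[0] * 100
    return f"{name} reports {growth:.1f}% total growth"


messages = [{"role": "user", "content": "How did sales change?"}]
print(run_with_tools(fake_llm, fake_mcp_server, messages))
# Analysis result: data_analyzer reports 21.0% total growth
```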

Example Use Case: Data Analysis Assistant

Scenario: Creating an assistant that can analyze data, generate insights, and create visualizations.

Configuration:

MCP Server URL: https://mcp-analytics.example.com/api/v1

LLM Provider: OpenAI GPT-4

System Prompt:

"You are a data analysis assistant with access to specialized tools. When users ask questions about data, use the appropriate tools to analyze the information and generate insights. The following tools are available to you: data_analyzer for statistical analysis, chart_generator for creating visualizations, and data_transformer for cleaning and restructuring data. Always explain your approach and the significance of your findings in business terms."

Allowed MCP Tools:

["data_analyzer", "chart_generator", "data_transformer"]
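The same configuration can be expressed as structured data, which makes it easy to check before the component runs. The `MCPComponentConfig` class and its field names are hypothetical; they mirror the labels above, not a documented schema, and the system prompt is shortened. The check confirms that the URL uses HTTP(S) and that the system prompt mentions every allowed tool, following the Best Practices advice to describe tools explicitly.

```python
from dataclasses import dataclass, field
from urllib.parse import urlparse


@dataclass
class MCPComponentConfig:
    """Hypothetical container mirroring the example configuration."""

    mcp_server_url: str
    llm_provider: str
    system_prompt: str
    allowed_mcp_tools: list[str] = field(default_factory=list)

    def problems(self) -> list[str]:
        """Return human-readable configuration problems (empty if none)."""
        issues = []
        if urlparse(self.mcp_server_url).scheme not in ("http", "https"):
            issues.append("MCP Server URL must start with http:// or https://")
        for tool in self.allowed_mcp_tools:
            # A tool the prompt never mentions is less likely to be used.
            if tool not in self.system_prompt:
                issues.append(f"System prompt does not mention '{tool}'")
        return issues


config = MCPComponentConfig(
    mcp_server_url="https://mcp-analytics.example.com/api/v1",
    llm_provider="OpenAI GPT-4",
    system_prompt=(
        "You are a data analysis assistant with access to specialized tools. "
        "Use data_analyzer for statistical analysis, chart_generator for "
        "creating visualizations, and data_transformer for cleaning data."
    ),
    allowed_mcp_tools=["data_analyzer", "chart_generator", "data_transformer"],
)
print(config.problems())  # []
```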

Conversation Flow:

User Input:

"I have quarterly sales data from our regional offices for the past two years. Can you analyze it to find any significant trends and create a visualization showing the performance of each region over time?"

Assistant's Process:

1. Use data_transformer to clean and structure the data.

2. Use data_analyzer to identify trends and patterns.

3. Use chart_generator to create a line chart.
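The three-step process above is a chain: each tool's output is the next tool's input. The sketch below shows that dependency with hypothetical, drastically simplified local functions named after the example tools; the real tools run on the MCP server, and their inputs and outputs are not specified in this document. The sample rows are made up for illustration only.

```python
def data_transformer(rows: list[dict]) -> list[dict]:
    # Hypothetical cleaning step: drop rows with missing sales values.
    return [r for r in rows if r.get("sales") is not None]


def data_analyzer(rows: list[dict]) -> dict[str, float]:
    # Hypothetical analysis: % change from first to last listed quarter per region.
    by_region: dict[str, list[float]] = {}
    for r in rows:
        by_region.setdefault(r["region"], []).append(r["sales"])
    return {
        region: round((s[-1] - s[0]) / s[0] * 100, 1)
        for region, s in by_region.items()
    }


def chart_generator(growth: dict[str, float]) -> str:
    # Hypothetical "chart": one text bar per region, one '#' per 5% growth.
    return "\n".join(
        f"{region:<8}{'#' * int(pct // 5)} {pct}%"
        for region, pct in sorted(growth.items())
    )


# Illustrative input only; each region's rows are listed in time order.
rows = [
    {"region": "West", "quarter": "Q1", "sales": 200.0},
    {"region": "West", "quarter": "Q2", "sales": None},
    {"region": "West", "quarter": "Q3", "sales": 240.0},
    {"region": "North", "quarter": "Q1", "sales": 150.0},
    {"region": "North", "quarter": "Q3", "sales": 153.0},
]
# Chain: the output of each tool feeds the next one.
print(chart_generator(data_analyzer(data_transformer(rows))))
```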

Assistant's Response:

"I've analyzed your regional sales data and here are the key findings:

**Key Trends:**

1. The Western region shows the strongest growth (18.4% YoY).

2. The Southern region has seasonal fluctuations (Q3 underperforming).

3. The Eastern region shows declining growth over the last three quarters.

4. The Northern region is the most stable.

**Seasonal Patterns:**

* All regions show a Q4 spike (holiday season).

* Q1 is consistently the weakest quarter.

* The Western region’s Q2 is exceptionally strong.

\[Visualization of sales performance]

Would you like a deeper analysis of any specific region?"

Benefits:

Specialized data analysis tools process complex information.

Visualizations improve clarity.

Responses include both technical and business insights.

The assistant explains its methodology and offers next steps.

Best Practices

Provide clear tool instructions in your system prompt.

Be specific about tool capabilities so the LLM knows when to use them.

Limit tools to what’s necessary for your use case.

Test different LLM providers to see which works best with MCP tools.

Design error handling for tool failures.

Consider tool dependencies when chaining operations.

Balance tool and LLM usage: don't call tools for tasks the LLM can handle on its own.

Ensure the MCP server is reliable, since every tool operation depends on it.
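For the "Design error handling for tool failures" practice, one common approach is to catch a failed tool call and return a structured error that the LLM can read, instead of aborting the whole response. This is a minimal sketch under that assumption; `safe_tool_call`, its retry count, and the `flaky_tool` stand-in are hypothetical and are not settings of the component.

```python
import time
from typing import Callable


def safe_tool_call(
    call: Callable[[], str], retries: int = 2, delay_s: float = 0.5
) -> dict:
    """Run a tool call, retrying on failure; never raise to the caller.

    Returns {"ok": True, "result": ...} or {"ok": False, "error": ...} so the
    LLM can explain the failure or try a different approach.
    """
    last_error = ""
    for attempt in range(retries + 1):
        try:
            return {"ok": True, "result": call()}
        except Exception as exc:  # Any tool failure is reported, not raised.
            last_error = f"{type(exc).__name__}: {exc}"
            if attempt < retries:
                time.sleep(delay_s)
    return {"ok": False, "error": last_error}


# Hypothetical tool that fails once (e.g. a dropped connection), then succeeds.
attempts = {"n": 0}


def flaky_tool() -> str:
    attempts["n"] += 1
    if attempts["n"] < 2:
        raise ConnectionError("MCP server unreachable")
    return "ok"


print(safe_tool_call(flaky_tool, delay_s=0))  # {'ok': True, 'result': 'ok'}
```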

Troubleshooting

| Issue | Possible Cause | Solution |
|---|---|---|
| LLM not using tools | Weak system prompt | Strengthen the system prompt with explicit instructions and examples. |
| Tool operation failures | MCP server issues | Verify the server URL and configuration, and check the server logs. |
| Specified tools not available | Tools missing on the server | Confirm the tools are available on the server and update allowed_tools. |
| Unexpected tool behavior | Misunderstood tool capabilities | Improve the prompt with precise tool descriptions. |
| Response timeout | Complex or multiple tool calls | Simplify the operations or adjust the timeout settings. |
| Inconsistent tool usage | Variation between LLM providers | Test alternative providers for better compatibility. |
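For the "Response timeout" row, a per-call time limit keeps one slow tool from stalling the whole response. The sketch below uses Python's standard `asyncio.wait_for`; the `slow_tool` coroutine and the time limits are hypothetical, and the component's own timeout settings may work differently.

```python
import asyncio


async def slow_tool(seconds: float) -> str:
    # Hypothetical stand-in for a tool call that takes a while on the server.
    await asyncio.sleep(seconds)
    return f"finished after {seconds}s"


async def call_with_timeout(seconds: float, limit_s: float) -> str:
    try:
        return await asyncio.wait_for(slow_tool(seconds), timeout=limit_s)
    except asyncio.TimeoutError:
        # Report the timeout as text the LLM can relay, rather than hanging.
        return f"Tool timed out after {limit_s}s"


print(asyncio.run(call_with_timeout(0.01, limit_s=2.0)))   # finished after 0.01s
print(asyncio.run(call_with_timeout(5.0, limit_s=0.05)))   # Tool timed out after 0.05s
```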

Limitations and Considerations

| Limitation / Consideration | Description |
|---|---|
| MCP Server Dependency | Functionality depends on the availability and reliability of the MCP server. |
| Tool Availability | Only tools available on the server can be used; the tools listed in allowed_tools must exist on the server. |
| LLM Provider Compatibility | Some providers are better at tool usage than others. |
| Latency Considerations | Tool calls add processing time, especially when several calls run in sequence. |
| Cost Implications | Additional costs may apply for MCP server and tool usage. |
| Security Considerations | Be mindful of sensitive data processed by external servers. |
| Tool Evolution | Available tools may change, so review configurations regularly. |