Summarize Text

The Summarize Text component condenses input text using a large language model (LLM) to generate a concise summary. Users specify the text to summarize, select an LLM provider, and set a word limit for the output. This enables efficient text reduction while retaining key information.

Overview

The Summarize Text component uses large language models (LLMs) to generate concise summaries of longer text inputs. It belongs to the Generative AI group in the Pipeline Builder and helps users create shorter versions of documents, articles, or other lengthy texts while preserving key information.


Key Terms

| Term | Definition |
|---|---|
| LLM | Large Language Model - an AI system trained on vast amounts of text data that can understand and generate human-like text. |
| Summarization | The process of creating a shorter version of text that captures the most important points. |
| Word Limit | The maximum number of words allowed in the generated summary. |
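To make the Word Limit term concrete, the following Python sketch counts the words in a summary and checks it against a limit. It assumes a word is a whitespace-separated token; the component's own counting rule is not documented, and the function names are hypothetical.

```python
def count_words(text: str) -> int:
    """Count whitespace-separated tokens (an assumed definition of 'word')."""
    return len(text.split())


def within_word_limit(summary: str, word_limit: int) -> bool:
    """Return True if the summary has at most word_limit words."""
    return count_words(summary) <= word_limit


print(count_words("MCP is a universal, open standard."))  # 6
print(within_word_limit("MCP is a universal, open standard.", 5))  # False
```

Punctuation attached to a word (such as "universal,") does not create an extra word under this rule.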

When to Use

Use when you need to extract key information from long documents.

Ideal for situations where you need to quickly understand the main points of lengthy content.

Helpful if your goal is to create digestible versions of reports, articles, or research papers.

Use when integrating summarization capabilities into automated document processing pipelines.

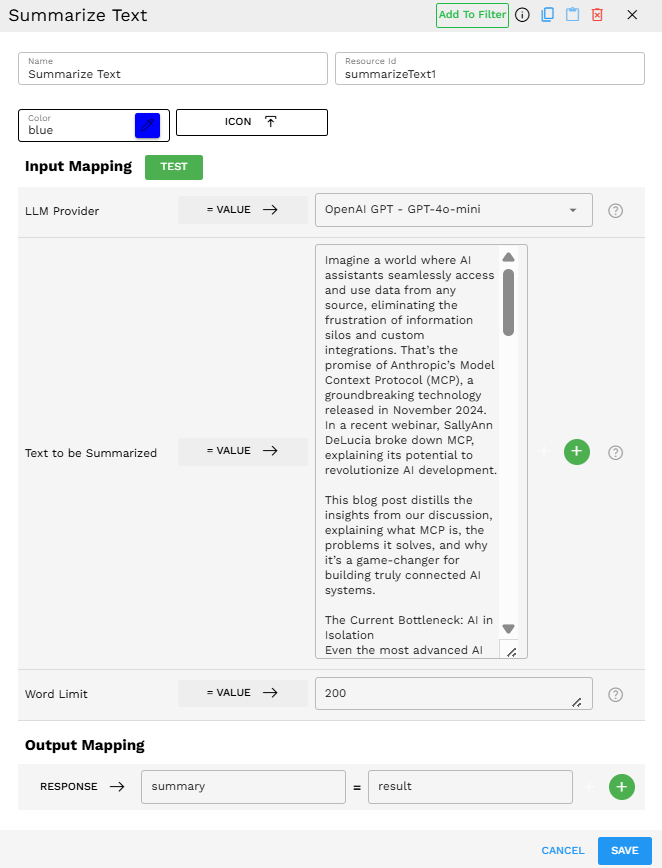

Component Configuration

Required Inputs

| Input | Description | Data Type | Example |
|---|---|---|---|
| LLM Provider | The language model service that performs the summarization task. Select from the providers available in your environment. | LLMProvider | OpenAI GPT - GPT-4o-mini |
| Text to be Summarized | The content you want to summarize. | Text | A blog post about Anthropic's Model Context Protocol |
| Word Limit | The maximum number of words for the generated summary. Use it to control the length of the output. | Integer | 200 |
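The three required inputs can be modeled as a small configuration object. This is a minimal Python sketch: the class name SummarizeTextConfig, its field names, and the validation rules (non-empty provider and text, positive integer limit) are assumptions for illustration, and the provider is represented as a plain string rather than the real LLMProvider type.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SummarizeTextConfig:
    """Hypothetical container for the component's three required inputs."""

    llm_provider: str  # stands in for the LLMProvider type
    text: str          # Text to be Summarized
    word_limit: int    # Word Limit (Integer)

    def __post_init__(self) -> None:
        # Assumed validation rules; the component's own checks are not documented.
        if not self.llm_provider:
            raise ValueError("llm_provider is required")
        if not self.text.strip():
            raise ValueError("text to be summarized must not be empty")
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(self.word_limit, bool) or not isinstance(self.word_limit, int):
            raise TypeError("word_limit must be an integer")
        if self.word_limit <= 0:
            raise ValueError("word_limit must be a positive integer")


config = SummarizeTextConfig(
    llm_provider="OpenAI GPT - GPT-4o-mini",
    text="Imagine a world where AI assistants seamlessly access ...",
    word_limit=200,
)
print(config.word_limit)  # 200
```

Validating the inputs up front means a misconfigured limit fails before any request is sent to the provider.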

How It Works

The component receives the text input and configuration parameters.

It sends the text to the specified LLM provider along with instructions to summarize.

The LLM processes the text, identifies key information, and generates a summary.

The summary is constrained by the provided word limit.

The component returns the summarized text as output.
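The steps above can be sketched in Python as follows. The provider is modeled as a hypothetical function that takes a prompt and returns text, the prompt wording is an assumption, and the final trimming step is this sketch's own safety net rather than documented behavior of the component.

```python
import json
from typing import Callable

# Hypothetical provider interface: takes a prompt string, returns the model's reply.
ProviderFn = Callable[[str], str]


def build_prompt(text: str, word_limit: int) -> str:
    """Assumed prompt template; the component's real instructions are not documented."""
    return (
        f"Summarize the following text in at most {word_limit} words, "
        "keeping the key information.\n\n"
        f"Text:\n{text}"
    )


def summarize_text(provider: ProviderFn, text: str, word_limit: int) -> dict:
    # Receive the inputs and send the text plus instructions to the provider.
    prompt = build_prompt(text, word_limit)
    # The LLM generates the summary.
    summary = provider(prompt).strip()
    # Assumption: trim any overflow so the result never exceeds the limit.
    words = summary.split()
    if len(words) > word_limit:
        summary = " ".join(words[:word_limit])
    # Return the summary in the {"result": ...} shape shown under Output Format.
    return {"result": summary}


def fake_provider(prompt: str) -> str:
    """Stand-in for a real LLM that always returns the same 14-word reply."""
    return "MCP is an open standard that connects AI systems to external data and tools."


out = summarize_text(fake_provider, "Long blog post text ...", word_limit=10)
print(json.dumps(out))
```

Hard truncation can cut a sentence mid-way, so it is only a fallback; in practice the prompt instruction does most of the work of keeping the summary short.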

Example Use Case

Scenario: Summarizing a blog post about Anthropic's Model Context Protocol

Input Text:

Imagine a world where AI assistants seamlessly access and use data from any source, eliminating the frustration of information silos and custom integrations. That's the promise of Anthropic's Model Context Protocol (MCP), a groundbreaking technology released in November 2024. In a recent webinar, SallyAnn DeLucia broke down MCP, explaining its potential to revolutionize AI development. This blog post distills the insights from our discussion, explaining what MCP is, the problems it solves, and why it's a game-changer for building truly connected AI systems. The Current Bottleneck: AI in Isolation Even the most advanced AI models are constrained by their isolation from data. They lack real-time awareness and struggle with fresh information, trapped behind information silos and legacy systems. As Anthropic notes, every new data source requires custom implementation, making scalable, connected AI systems incredibly difficult to build. Enter MCP: A Universal Standard for AI Connectivity MCP is a universal, open standard designed to connect AI systems with external data and tools. Its core purpose, as Anthropic describes, is to replace fragmented integrations with a single protocol, enabling developers to build secure, two-way connections between their AI and any external functionality required to complete a task. Think of it as a "USB for AI," standardizing how AI models interact with tools, databases, and actions, regardless of their source. How MCP Works: A Client-Server Architecture MCP operates on a client-server architecture, consisting of three key components: * Host: The AI system itself (for example, AI applications, LLM agents, Claude Desktop). * Client: The connection handler between the host and servers. * Server: Any program exposing capabilities or data to the host (for example, local files, databases, APIs). Essentially, servers act as "windows" into the external world for the AI system, enabling seamless communication. 
Impact on Key AI Development Areas MCP's impact extends beyond simple connectivity, fundamentally changing how we approach core AI development tasks. Let's explore how this protocol streamlines and enhances key areas: * Function Calling: MCP standardizes the function calling pipeline, making it reusable and secure. No more custom code for every integration! * RAG (Retrieval Augmented Generation): MCP simplifies the retrieval pipeline, offering declarative access to various data sources. This leads to more scalable and maintainable RAG systems, reducing hallucinations and enabling complex multi-source flows. Why Developers Should Care About MCP For AI engineers and product teams, MCP isn't just a technical novelty; it's a tool that directly addresses real-world development challenges and unlocks significant benefits. Here's why MCP should be on your radar: * Streamlined AI Engineering: Build once, connect many times, reducing boilerplate code and debugging time. * Faster Product Velocity: Focus on functionality, not connectivity, speeding up time-to-market. * Consistent Performance and Reliability: Enjoy standardized, dependable performance. * Reduced Technical Debt: Adopt a unified approach to integrations. * Future-Proofing: Build a stable foundation for future AI capabilities.

Configuration:

LLM Provider: OpenAI GPT - GPT-4o-mini

Text to be Summarized: [Blog post text above]

Word Limit: 200

Output:

Anthropic's Model Context Protocol (MCP), introduced in November 2024, aims to revolutionize AI by providing a universal standard for connectivity, eliminating the challenges posed by information silos and custom integrations that limit AI capabilities. In a detailed webinar led by SallyAnn DeLucia, the protocol was explained as a transformative solution to enhance AI system interactions with external data sources and tools. Currently, AI models face significant limitations due to their isolation from real-time information, necessitating custom implementations for every new data source. MCP addresses this by creating a client-server architecture that fosters seamless two-way connections between AI systems (the host), connection handlers (clients), and external data sources or programs (servers). This setup streamlines function calling and retrieval augmented generation, making processes reusable, secure, and easier to maintain. MCP empowers developers by simplifying the integration of various functionalities, enabling faster product development, reducing boilerplate code, and maintaining consistent performance, which minimizes technical debt. As a foundational tool, MCP not only addresses current development challenges but also sets the stage for future advancements in AI capabilities, making it essential for engineers and product teams to adopt.

Output Format

The output is a JSON object whose result field contains the summary as a text string:

{"result":"Anthropic's Model Context Protocol (MCP), introduced in November 2024, aims to revolutionize AI by providing a universal standard for connectivity, eliminating the challenges posed by information silos and custom integrations that limit AI capabilities..."}
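A downstream step can read the summary from the result field. Because the example output above is truncated with "...", this Python sketch parses a short, complete stand-in string instead; the word count treats whitespace-separated tokens as words, which is an assumption.

```python
import json

# Illustrative, untruncated stand-in for the component's output.
raw_output = (
    '{"result": "The Model Context Protocol (MCP) is an open standard '
    'for connecting AI systems to external data and tools."}'
)

payload = json.loads(raw_output)   # parse the JSON object
summary = payload["result"]        # the summary text itself
word_count = len(summary.split())  # whitespace-based word count (assumption)

print(summary)
print(word_count)  # 18
```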

Best Practices

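Set the word limit according to the length of the original text and the level of detail you need in the summary. LLMs do not always follow word limits exactly, so check the output length when the limit matters.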

Test different LLM providers to find the one that produces the best summaries for your specific use case.
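One way to compare providers is to run the same text through each one and record how closely it follows the word limit. In this Python sketch the providers are hypothetical stand-in functions returning fixed text, and the prompt wording is an assumption; in practice you would also judge summary quality, which a word count cannot capture.

```python
from typing import Callable, Dict


def count_words(text: str) -> int:
    """Count whitespace-separated tokens (an assumed definition of 'word')."""
    return len(text.split())


def compare_providers(
    providers: Dict[str, Callable[[str], str]], text: str, word_limit: int
) -> Dict[str, dict]:
    """Run the same text through each provider and record length compliance."""
    prompt = f"Summarize the following text in at most {word_limit} words:\n\n{text}"
    report = {}
    for name, provider in providers.items():
        summary = provider(prompt)
        n = count_words(summary)
        report[name] = {"words": n, "within_limit": n <= word_limit, "summary": summary}
    return report


# Hypothetical stand-ins for two configured providers.
providers = {
    "provider_a": lambda prompt: "MCP standardizes how AI systems connect to tools and data.",
    "provider_b": lambda prompt: (
        "MCP is an open client-server protocol that replaces custom, fragmented "
        "integrations between AI applications and external data sources, tools, and APIs."
    ),
}

report = compare_providers(providers, "Long blog post text ...", word_limit=15)
for name, result in report.items():
    print(name, result["words"], result["within_limit"])
```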